Introduction to Canonical Deletion

by Derrick Stolee

Today, I discuss McKay’s isomorph-free generation technique, commonly called canonical deletion, or canonical construction path. The technique guarantees that every unlabeled object of a desired property will be visited exactly once. The word visited means that a labeled object in the isomorphism class is generated and tested for the given property and possibly used to extend to other objects. Also, objects that do not satisfy the property are visited at most once because we may be able to use pruning to avoid generating objects that do not lead to solutions.

The canonical deletion technique is so called from its use of reversing the augmentation process in order to guarantee there is exactly one path of augmentations from a base object to every unlabeled object. Essentially, a deletion function is defined that selects a part of the combinatorial object to remove, and this function is invariant up to isomorphism. Then, when augmenting an object this augmentation is compared to the canonical deletion of the resulting object to check if this is the “correct” way to build the larger object. If not, the augmentation is rejected and the larger object is not visited. By following the canonical deletion from any unlabeled object, we can reconstruct the unique sequence of augmentations that leads from a base object to that unlabeled object. Further, this process is entirely local: it depends only on the current object and current position within the search tree. This allows the process to be effective even when parallelizing across computation nodes without needing any communication between nodes, unlike some other isomorph-free generation methods which require keeping a list of previously visited objects. Along the way, we will use a consistent example of generating a certain class of graphs to make the discussion more concrete.

Disclaimer: This post was adapted from a background chapter of my Ph.D. thesis. It was written to provide a different perspective on the very abstract nature of the generation algorithm. There are some very subtle points that can be missed easily in McKay’s original paper.

Objects, Augmentations, and Deletions

Suppose we are searching for combinatorial objects from a family of labeled objects. Under the appropriate definition of isomorphism for those objects, let

be the isomorphism relation and

be the family of unlabeled objects: the equivalence classes under

. Let

be a property, and we wish to generate all objects

in

where

. We shall assume the property

is invariant under isomorphism (

): for all unlabeled objects

and labeled objects

,

. In this case, we can define

for an unlabeled object

to be equal to

for any labeled object

.

Example: Let be the set of graphs of order

. Then

is the family of unlabeled graphs where the standard relation of isomorphism (

) is used between graphs. The property

could be

if and only if

is 4-regular and

has chromatic number three. One nice aspect of the property

is that all induced subgraphs of 4-regular 3-chromatic graphs have maximum degree at most 4 and chromatic number at most 3.

We require a set of base objects and an augmentation. Let

be a set of labeled objects where every pair

has

.

For a labeled object , the augmentation defines a set

of augmentations. An object in

should specify enough information to determine how to augment

to create a new object

. Let

be the set of deletions, which specify the information to determine how to delete something from

to create a smaller object

.

There must be a bijection from augmentations to deletions. In some sense, this bijection should be natural, in that an augmentation

maps to

if and only if performing the augmentation

on

creates the object

and performing the deletion

on

results in

.

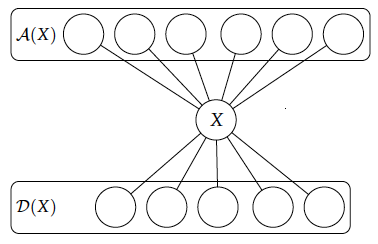

We shall consider our objects as being built up, so the set of augmented objects can be called the above objects while the deleted objects

are the downward objects. (The sets

and

were originally called lower objects

and upper objects

by McKay. In addition to the problem that the letters

and

are already used for labeled and unlabeled objects, the notation for

and

is confusing because McKay never explicitly states which direction is “up”!.) Figure 1 provides a visualization of these sets with respect to a labeled object

.

Example: Suppose we wish to enumerate all connected graphs of order . One augmentation step is given by adding a vertex and specifying its neighborhood. Since every connected graph contains an edge, we can start from

as a base object (so

).

Every vertex augmentation is given by specifying the neighborhood of the new vertex in the graph, so every augmentation is a pair with

. To guarantee connectedness, we can assume that

. To delete a vertex, we must only specify the vertex, so we can let pairs

with

be a deletion leading to the graph

. From this, let

, and

. The natural bijection

can be defined as mapping a graph, subset pair

to the graph, vertex pair

where the graph

has vertex set

and

has edges given by

Figure 2 shows an example graph

of order three with all deletions and augmentations specified. The augmentations have white circles representing the vertices in

while the deletions have red circles representing the vertex

to delete. Further, the deletions,

, are connected to the augmentations of the two graphs of order two and the augmentations,

, are connected to the deletions of the graphs of order four.

These definitions of augmentations and deletions are based on labeled objects. In order to generate at most one labeled representative of every unlabeled object, we must consider what isomorphism means in this context.

Augmentations and Orbits

For isomorphism concerns, we shall assume that for an unlabeled object , any two labeled objects

have a bijection

and

such that the following statements hold:

- For all

, the object

such that

and object

such that

are isomorphic (

).

- For all

, the object

such that

and the object

such that

are isomorphic (

).

This allows us to define the augmented and deleted objects and

for unlabeled objects

as well.

Example: If two graphs, and

, are isomorphic via a bijection

, then an augmentation

maps to

where

. Further, a deletion

maps directly to

. Therefore, any two isomorphic graphs

and

have a bijection

.

Now, we wish to remove isomorphic duplicates, so the most natural first step is to remove duplicate augmentations. That is, if we are augmenting an object via two augmentations

, we should make sure that

is not isomorphic to

or else

and

will be isomorphic. These types of decisions can be made using the automorphism group of the object

.

Example: When augmenting a graph by adding a vertex, if there is an automorphism

such that

maps a set

to a set

, then the augmentations

and

create isomorphic graphs

and

. Therefore, we should compute all set orbits and select exactly one representative from each orbit. [Since this calculation seems inefficient when the sets can have arbitrary size (i.e.

is not bounded), McKay’s paper has a workaround to avoid duplications without sacrificing efficiency.]

It is an unfortunate fact that these local orbit calculations are not enough to guarantee that we shall not create duplicate objects. There are several reasons, including:

- Augmentations that are not in orbit can create isomorphic objects. See Figure 3.a, where two single-vertex neighborhoods are not in orbit but augmenting by either creates the same unlabeled graph. Figure 3.b shows this ambiguity in the reverse direction, where two deletions from different orbits result in the same graph.

- There may be two internally disjoint sequences of non-isomorphic augmentations which generate the same unlabeled object. Figure 4 shows two different sequences of three vertex augmentations starting from

that generate the same unlabeled object. What is most important is that these paths are internally disjoint with respect to unlabeled objects. Thus, no amount of verifying that isomorphic duplicates are avoided at the next level (or some constant number of levels), eventually some objects will be repeated.

These concerns demonstrate that a “bottom up” approach to avoiding duplicate graphs is not satisfactory. The canonical deletion technique avoids this issue by reversing the process: it makes sure that every unlabeled graph has a unique sequence of deletions that leads to a base object.

Canonical Labelings

In order to avoid the previously mentioned pitfalls that lead to multiple labeled representatives of an isomorphism class being visited in the search, we must utilize a powerful tool that allows us to compute invariants.

For a family of labeled objects whose unlabeled objects are

, a canonical labeling is a function

where for every unlabeled object

and every pair of labeled objects

, the two labeled objects

and

are equal.

In this sense, the labeling function will \textit{relabel} an object

to create another labeled object

of the same isomorphism class. The important behavior is that

is the same labeled object for the entire isomorphism class, so the object

is invariant for unlabeled objects.

Example: When the family of labeled objects is a collection of undirected (or directed) graphs, then a canonical labeling

can be selected to be the graph

with lexicographically-least adjacency matrix. This choice of canonical labeling fits the definition, but is difficult to compute. Instead, McKay’s

nauty library was designed to efficiently compute canonical labelings. The algorithm defines the map , and is not easily described. See the survey paper by Hartke and Radcliffe for a full description of the

nauty algorithm.

Canonical Deletions

Finally, we are able to describe the canonical deletion. This deletion is the most fundamental concern to this search technique. A canonical deletion function is a map such that

and for any two isomorphic objects

we have isomorphism of their deletions:

.

Example: When building graphs by vertex augmentations, a graph has deletion set

. Thus, we need only select a single vertex up to isomorphism. By computing the canonical labeling

, we find an ordering of

that is invariant up to isomorphism. Therefore, let

be the vertex which maps to the smallest-index vertex in

when using an isomorphism from

to

. Since the isomorphism used above is arbitrary, this only determines the canonical deletion up to vertex orbits, but this is still appropriately invariant under isomorphism.

WARNING: When restricting to connected graphs, we must be sure that the deletion we select leads to a connected graph. Therefore, the canonical deletion should select the smallest-index vertex that is not a cut vertex.

Given access to a canonical deletion, we can now describe the full canonical deletion algorithm.

CanonicalDeletion()

if Prune()

// There are no solutions “above” this object.

return

if IsSolution()

// This object is a solution.

Output

ifOrder()

// This object is too large to augment.

return

for all augmentations , up to isomorphism

// Convert the augmentation into a deletion.

.

// Find the labeled object that corresponds to that deletion.

.

// Compute that object’s canonical deletion.

.

// Test if the current augmentation corresponds to that canonical deletion.

if

// Visit the object .

call CanonicalDeletion()

return

The reason this algorithm is correct is due to the fact that following the canonical deletions in reverse allows you to reconstruct the unique sequence of augmentations that correspond to those deletions (just in reverse order).

Given a labeled object , the deletion sequence from

is a sequence

,

,

,

,

of labeled objects such that

- The first object

is equal to

.

- For

,

. That is, the canonical deletion from

corresponds to an augmentation from

.

- The last object

is a base object:

.

Thus, the objects that are visited by the CanonicalDeletion algorithm are exactly those with deletion sequences where Prune(

) never holds and

. In the call to CanonicalDeletion(

), only the augmentation

that has

will succeed in generating

, leading to the call CanonicalDeletion(

). Since we are restricting to a single representative of the augmentation orbits, this leads to exactly one generation of

.

It is very important that we make the distinction that for an augmentation we have

for an augmented object

and not simply that

is isomorphic to the object resulting from performing the deletion

on

. This is due to the fact that multiple non-isomorphic deletions may lead to the same unlabeled object. Recall that Figure 3.b gave an example of such an ambiguity.

Figure 5 presents an example of the complicated network that may exist between the augmentations and deletions of combinatorial objects, but that the canonical deletion selects a subtree structure to this network. Specifically, the canonical deletions are presented as thick lines and there is exactly one path from a given object to the base object.

Example: The algorithm GraphCanonicalDeletion is a specific implementation of the canonical deletion algorithm for generating graphs by vertex augmentations. It restricts to graphs with maximum degree four and chromatic number at most three.

GraphCanonicalDeletion()

if or

// There are no solutions with as an induced subgraph.

return

if and

and

// This graph is a solution.

Output

return

if

// This graph is too big to extend

return

for all orbits of non-empty sets

with

Let be any representative.

// Create the augmented graph.

.

Compute that object’s canonical deletion.

.

// Test if the current augmentation corresponds to that canonical deletion.

if and

are in the same

-orbit

Visit the graph .

call GraphCanonicalDeletion()

return

Efficiency Considerations

Based on my experience in creating specific implementations of the canonical deletion algorithm, I contribute a few suggested methods for writing more efficient software.

Deletion by filtering

Calling nauty is an expensive computation, so it should be avoided whenever possible. However, we cannot exactly compute the canonical deletion without it, but we can sometimes determine that our current augmentation does not correspond to the canonical deletion without using nauty. Therefore, I instead create a method IsCanonical(), where

is the augmented graph and

is the augmentation I used to create

. Then, the method can return

at the earliest time that it detects this is NOT the canonical deletion. This process is then helped by a staged selection of canonical deletion:

- Start with an easy invariant metric, such as minimum degree of a vertex. This restricts the possible deletions very quickly in most cases.

- Define a sequence of more complicated invariant metrics, such as minimizing the sum of the squares of all the neighbor degrees. Such invariants can remove even more choices without being overly costly to compute.

- If all previous restrictions on the choice of canonical deletion did not prove that the current augmentation is not canonical, we have two options. We can check if the list of feasible deletions that fit the previous invariants has size exactly one. If so, then we know without a doubt that this deletion is canonical. Otherwise, we need to select a deletion using a canonical labeling and then check if the current augmentation is in orbit with that canonical deletion. Both of these checks can be done using a single call to

nauty.

Reduced augmentation count

In the previous suggestion, we restricted the choice of canonical deletion to a simple invariant minimization, such as minimizing the vertex degree. Making such a restriction as part of your canonical deletion can reduce the number of augmentations you attempt, for instance by only augmenting vertices of degree at most .

Pruning in deletion

Again, since calling nauty is probably the most computationally expensive subroutine in this algorithm, it may be useful to check if the augmented graph should be pruned even before finishing the canonical deletion. This may depend on how complicated your Prune() method is, but if Prune(

) holds, there is no reason to visit that graph and hence no reason to compute a canonical labeling.

Skipping augmentation orbit calculation

For some augmentations, it may be difficult to completely compute the orbits of augmentations. Vertex augmentations is such an example, because there are many possible neighborhood sets. It may be more beneficial to ignore the automorphism calculation and instead just attempt every augmentation. For every successful augmentation, store a canonical labeling of the augmented object. Then, the objects generated from the current object are visited if and only if the augmentation corresponds to the canonical deletion and that augmented object is not isomorphic to any previous augmentation. By storing a list of visited objects at a given node, we are using the canonical labelings locally, which can be very efficient. See McKay’s original paper for more details about this strategy.

Experiment with the augmentation step

Depending on what type of objects you are generating, there may be something special about their structure that you can exploit in the augmentation step. Observe that in a triangle-free graph every vertex neighborhood is an independent set. McKay provides an example of generating triangle-free graphs by making the vertex augmentations only use independent sets. More radical experimentation of augmentations will be discussed in later posts.

References

S. G. Hartke and A. Radcliffe. Mckay’s canonical graph labeling algorithm. In Communicating Mathematics, 479, 99–111. American Mathematical Society, 2009.

B. D. McKay. Isomorph-free exhaustive generation. J. Algorithms, 26(2):306–324, 1998.

B. D. McKay. nauty user’s guide (version 2.4). Dept. Computer Science, Austral. Nat. Univ., 2006.

Great post.

There are some $-signs floating around somewhere, which was not converted to LaTeX, I think you should be able to find them all by just search for “$”.

Thanks for the heads-up. I thought I caught them all, but I’ll be sure to check more carefully next time.